SciELO - Brasil - Concordância entre avaliadores na seleção de artigos em revisões sistemáticas [Inter-rater agreement in article selection for systematic reviews]

(PDF) Measuring agreement of administrative data with chart data using prevalence unadjusted and adjusted kappa

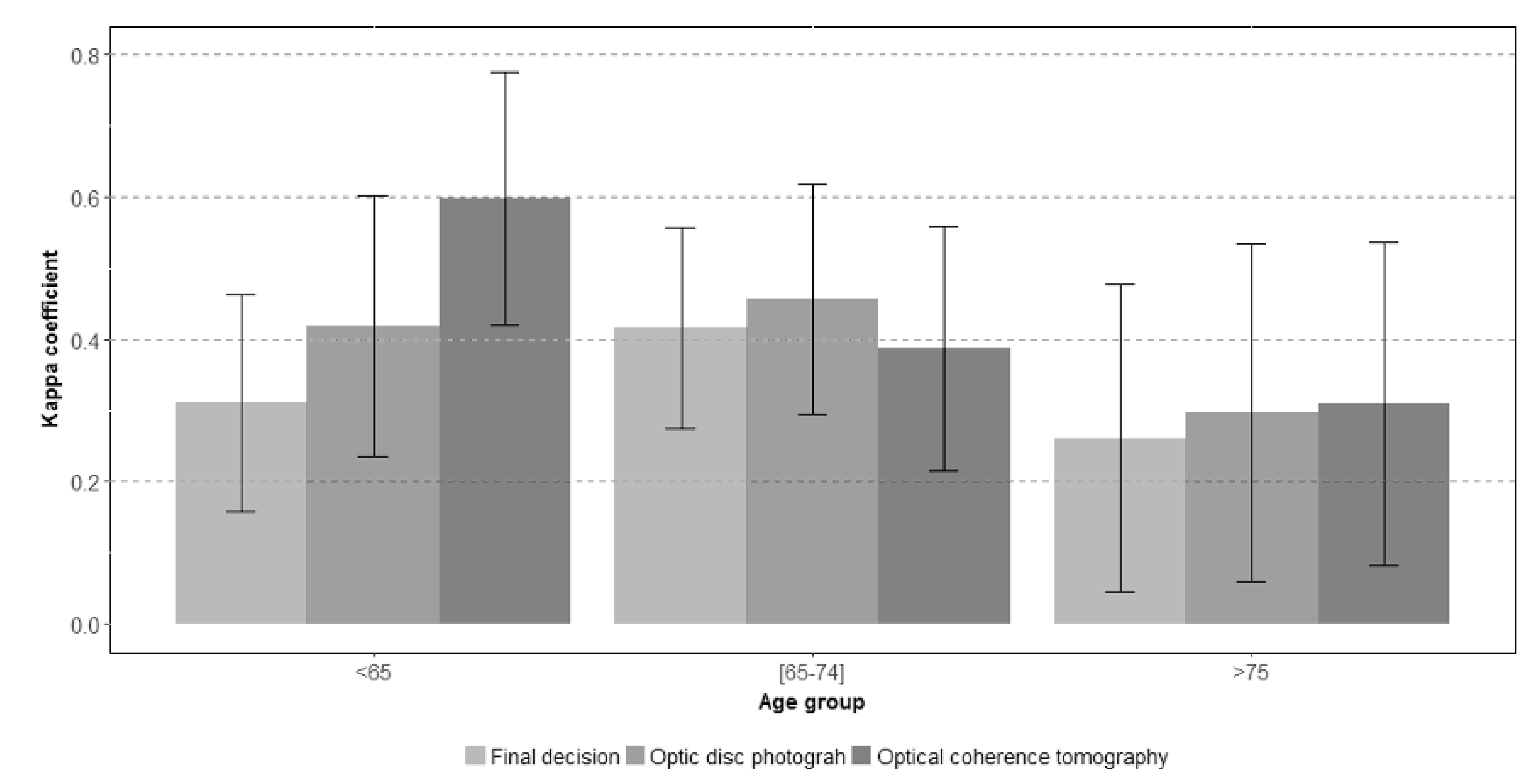

JCM | Free Full-Text | Interobserver and Intertest Agreement in Telemedicine Glaucoma Screening with Optic Disk Photos and Optical Coherence Tomography

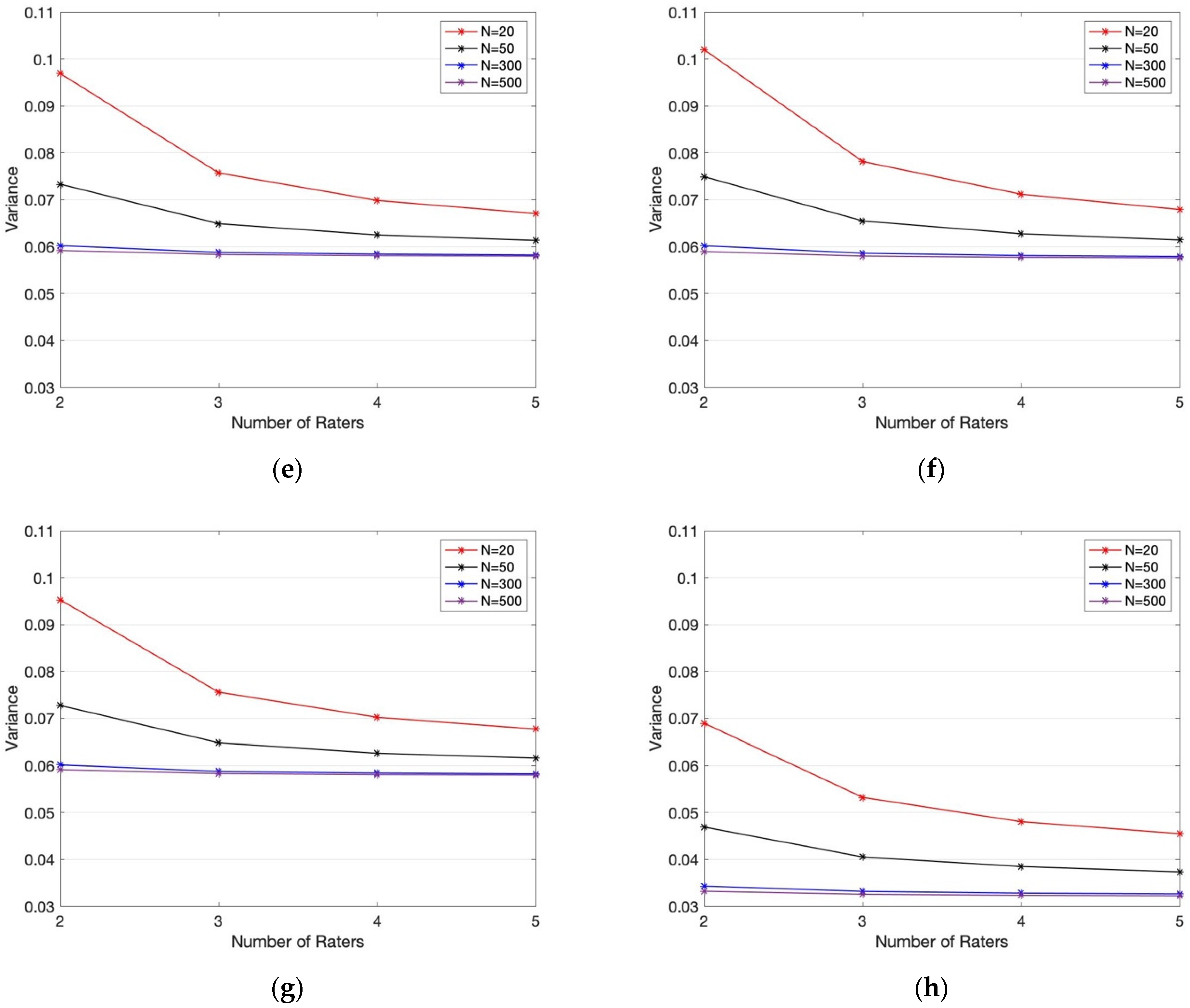

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

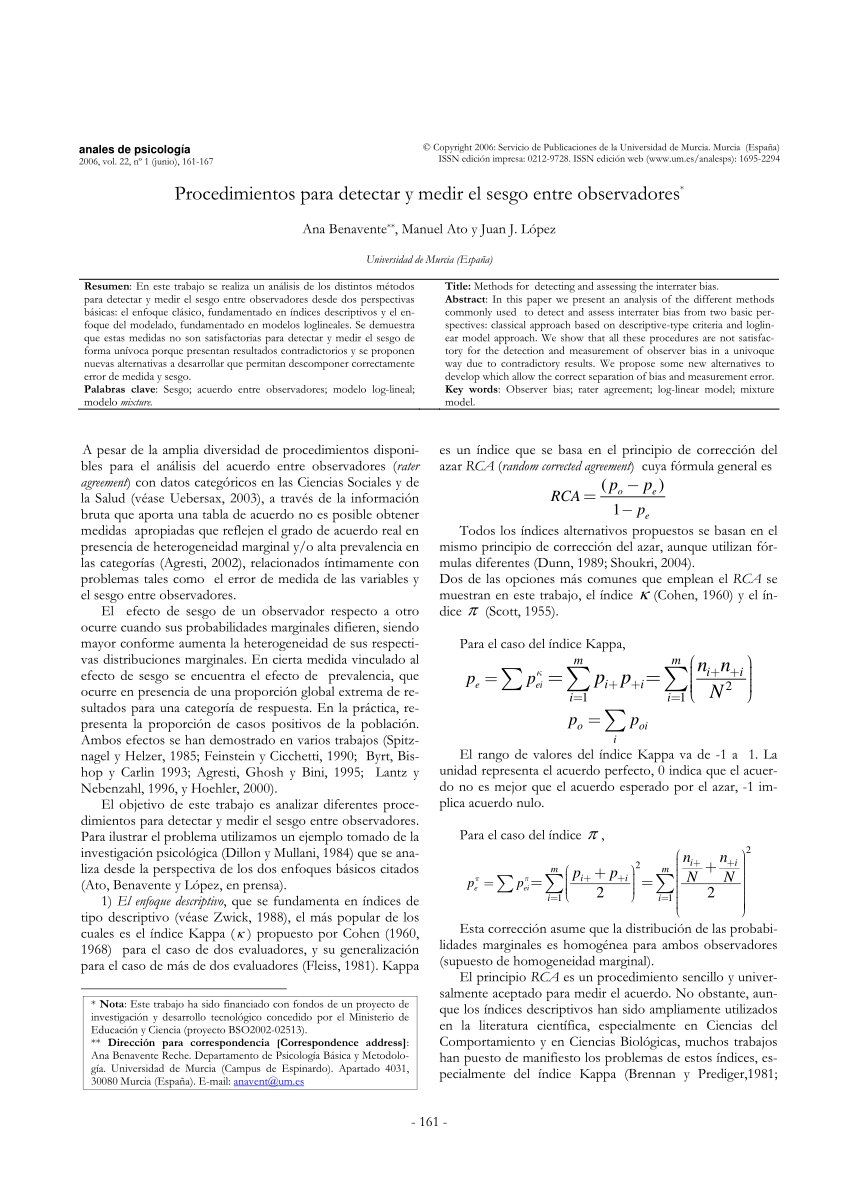

![PDF) Análisis comparativo de tres enfoques para evaluar el acuerdo entre observadores [Comparative analysis of three approaches for rater agreement] PDF) Análisis comparativo de tres enfoques para evaluar el acuerdo entre observadores [Comparative analysis of three approaches for rater agreement]](https://i1.rgstatic.net/publication/39269699_Analisis_comparativo_de_tres_enfoques_para_evaluar_el_acuerdo_entre_observadores_Comparative_analysis_of_three_approaches_for_rater_agreement/links/0deec52b02b108998d000000/largepreview.png)

(PDF) Análisis comparativo de tres enfoques para evaluar el acuerdo entre observadores [Comparative analysis of three approaches for rater agreement]

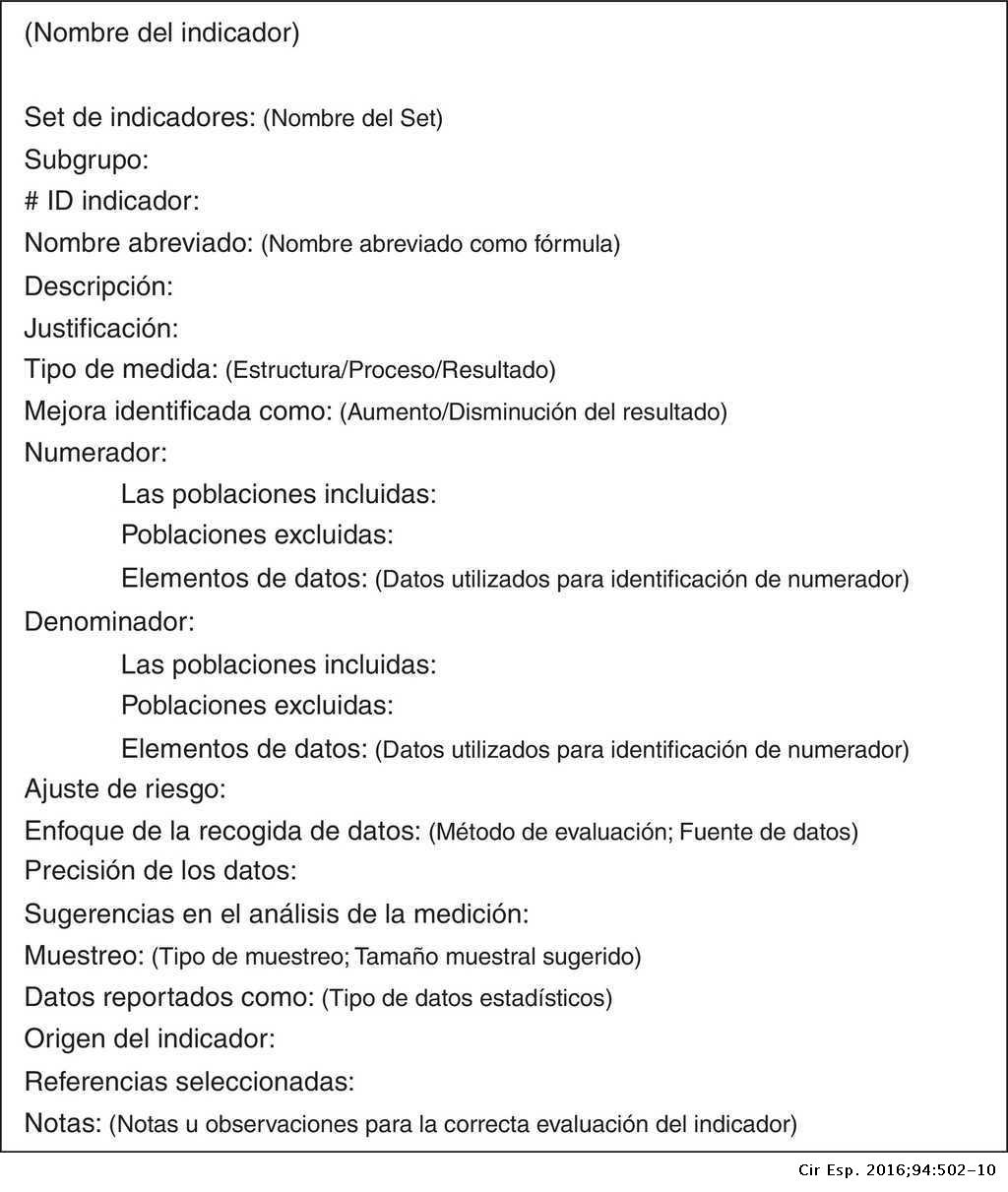

Desarrollo y estudio piloto de un conjunto esencial de indicadores para los servicios de cirugía general [Development and pilot study of an essential set of indicators for general surgery services] | Cirugía Española

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

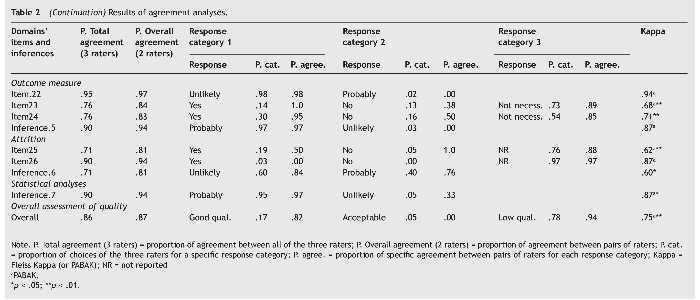

Q-Coh: A tool to screen the methodological quality of cohort studies in systematic reviews and meta-analyses | International Journal of Clinical and Health Psychology

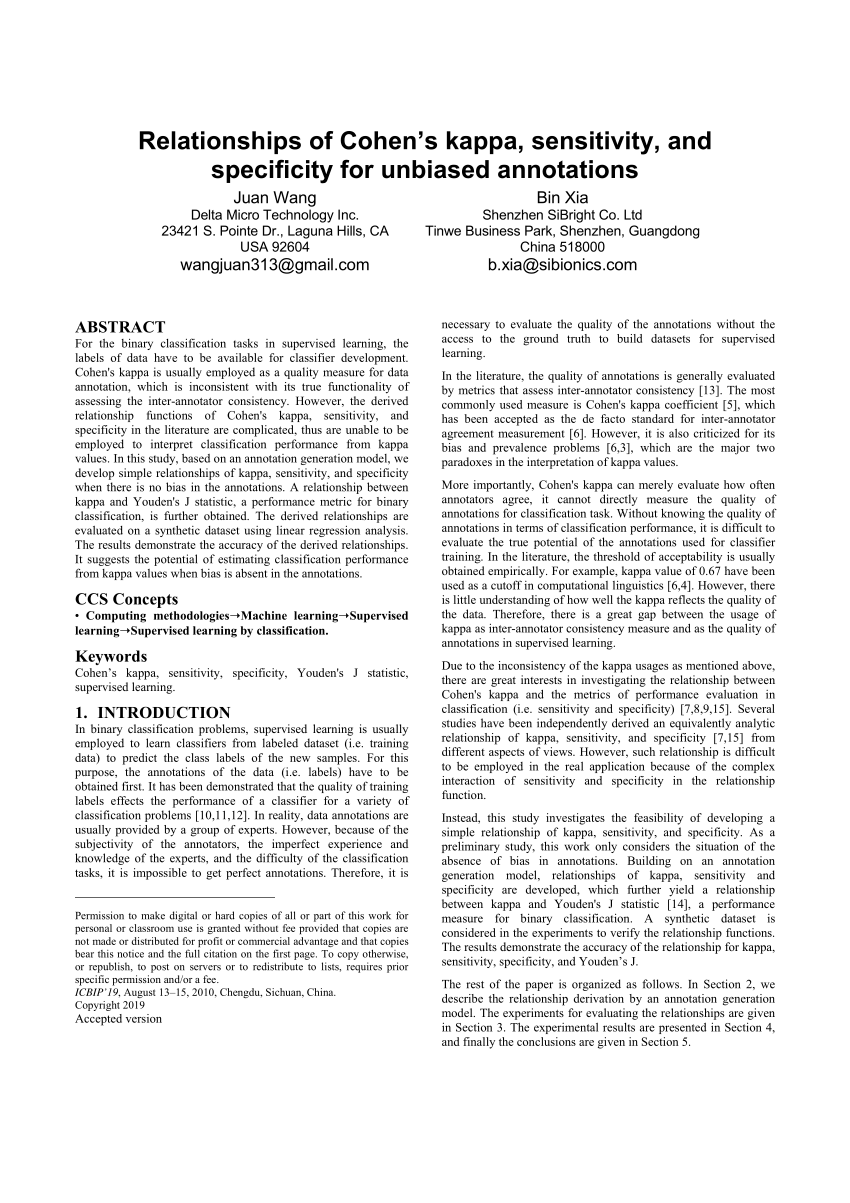

(PDF) Explaining the unsuitability of the kappa coefficient in the assessment and comparison of the accuracy of thematic maps obtained by image classification (2020) | Giles M. Foody | 87 Citations

[PDF] More than Just the Kappa Coefficient: A Program to Fully Characterize Inter-Rater Reliability between Two Raters | Semantic Scholar

SciELO - Brasil - Confiabilidade interobservadores na classificação de pares formados no relacionamento probabilístico entre bases de dados do SISMAMA [Interobserver reliability in classifying pairs formed by probabilistic linkage between SISMAMA databases]

REPRODUCTIBILITAT INTRAOBSERVADOR EN LA TESI DOCTORAL ANÀLISI METODOLÒGIC DELS ESTUDIS DE CONCORDANÇA INTEROBSERVADOR I CARAC

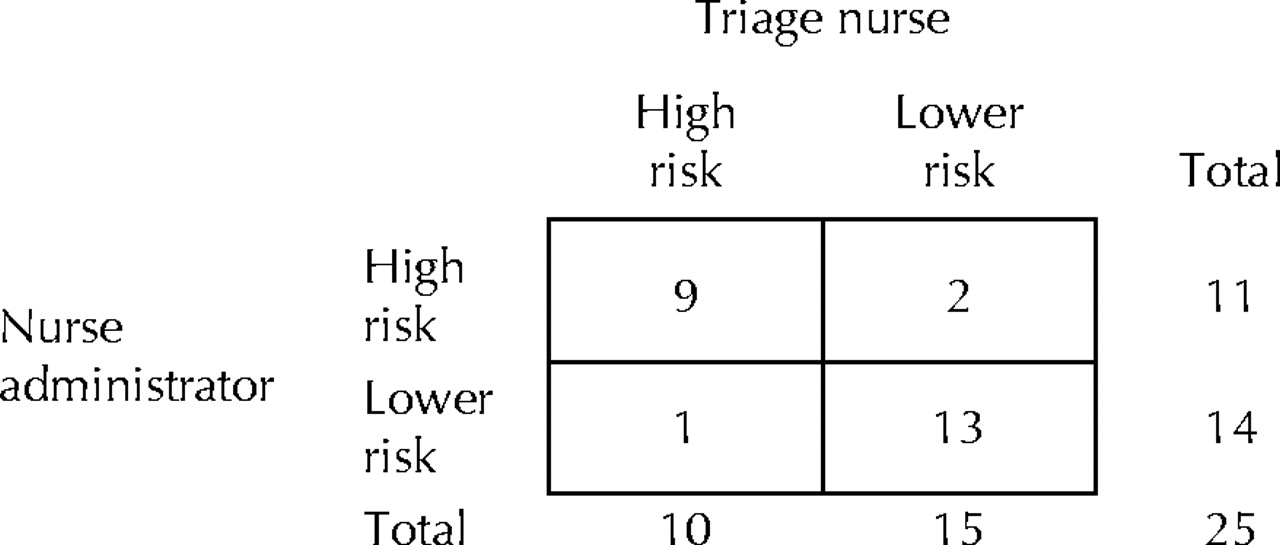

![PDF] The kappa statistic in reliability studies: use, interpretation, and sample size requirements. | Semantic Scholar PDF] The kappa statistic in reliability studies: use, interpretation, and sample size requirements. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/6d3768fde2a9dbf78644f0a817d4470c836e60b7/4-Table2-1.png)

[PDF] The kappa statistic in reliability studies: use, interpretation, and sample size requirements. | Semantic Scholar

![(PDF) [Reliability and validity of a generic job exposure matrix applied on a small-business]](https://i1.rgstatic.net/publication/6147747_Reliability_and_validity_of_a_generic_job_exposure_matrix_applied_on_a_small-business/links/00b49539129e3c67d7000000/largepreview.png)